A sitemap helps search engines discover the important pages, images, and files on your website. But even a small spelling or formatting error in your sitemap can create crawling and indexing problems that quietly hurt SEO. Google treats sitemaps as a discovery signal, not a guarantee of indexing, so your sitemap needs to be accurate, valid, and regularly maintained to be useful. If you are dealing with a sitemap generator spellmistake, the issue may look minor at first. In reality, incorrect file names, broken XML tags, wrong URLs, mixed protocols, and outdated links can all reduce the value of your sitemap and delay search engine discovery.

This guide explains what a sitemap generator by spellmistake means, why it matters, how to fix it, and what best practices matter most now.

What Is a Sitemap Generator?

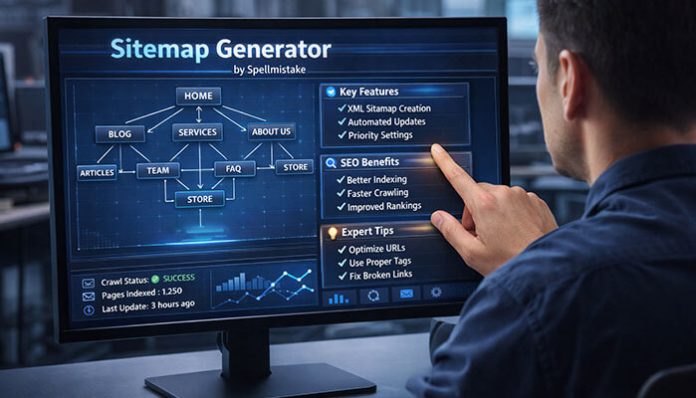

A sitemap generator is a tool, plugin, CMS feature, or crawler-based program that creates a sitemap for your website. In most cases, this is an XML sitemap that lists the URLs you want search engines to crawl more efficiently.

A sitemap can help search engines find pages they may not easily discover through normal crawling, especially on large sites, new sites, or sites with complex internal linking. However, submitting a sitemap does not guarantee that every listed page will be crawled or indexed.

Common sitemap formats include:

- XML sitemaps for search engines

- HTML sitemaps for users

- Image, video, and other specialized sitemap formats for certain content types

What Does “Sitemap Generator By Spellmistake” Mean?

The phrase sitemap generator by spellmistake refers to errors caused by spelling mistakes, syntax mistakes, bad formatting, or incorrect sitemap output during sitemap creation.

These mistakes can happen when:

- a plugin generates the wrong file or wrong URLs

- someone edits the sitemap manually

- an old sitemap is still being served from cache

- URLs were changed but the sitemap was not updated

- XML tags were typed incorrectly

- HTTP and HTTPS versions were mixed

Even small mistakes of this kind can quietly damage crawling and indexing if they are not caught early.

Why Sitemap Spellmistakes Are Dangerous for SEO

Sitemap mistakes are dangerous because search engines rely on clean, valid sitemap data to discover and prioritize URLs more efficiently. If the sitemap contains invalid XML, broken links, redirected pages, or the wrong canonical versions, search engines may ignore parts of it or waste crawl effort on low-value URLs. Google explicitly says that sitemaps help with discovery, but they do not override normal quality and indexing systems.

Common SEO problems caused by sitemap errors include:

- important pages being discovered more slowly

- crawl resources being wasted on broken or redirected URLs

- mixed indexing signals between canonical and non-canonical pages

- image or video content not being surfaced correctly

- incomplete coverage in Google Search Console or Bing Webmaster Tools

Common Sitemap Generator By Spellmistakes

1. Incorrect Sitemap File Name

One of the most common problems is using the wrong sitemap file name or wrong file path.

Examples:

- sitmap.xml

- site-map.xml

- sitemap.XML

The sitemap protocol itself is strict about structure, while search engines need the correct file URL to fetch the sitemap successfully. A wrong path or file name can stop discovery completely.
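As a rough illustration, a small Python helper can flag file names that look like typos of the expected one. This is only a sketch: the function name `check_sitemap_filename`, the 0.8 similarity threshold, and the assumption that your site uses the conventional `sitemap.xml` name are all illustrative choices, and other names (such as a sitemap index file) can be perfectly valid.

```python
import difflib

# Assumption: the site uses the conventional file name.
EXPECTED_NAME = "sitemap.xml"


def check_sitemap_filename(name: str, expected: str = EXPECTED_NAME) -> list[str]:
    """Return warnings for a sitemap file name; an empty list means nothing was flagged."""
    if name == expected:
        return []
    if name.lower() == expected.lower():
        # Same letters, different case: many servers treat paths as case-sensitive.
        return [f"case differs from {expected!r}"]
    # Similarity ratio between 0 and 1; the 0.8 cut-off is an arbitrary choice.
    similarity = difflib.SequenceMatcher(None, name.lower(), expected.lower()).ratio()
    if similarity >= 0.8:
        return [f"{name!r} looks like a misspelling of {expected!r}"]
    return []


for candidate in ["sitmap.xml", "site-map.xml", "sitemap.XML", "sitemap.xml"]:
    print(candidate, check_sitemap_filename(candidate))
```

A check like this only catches near-misses. It cannot tell you whether the file is actually reachable at the URL you submitted.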

2. Misspelled URLs Inside the Sitemap

If a sitemap includes incorrect slugs, extra characters, missing hyphens, wrong capitalization, or outdated URLs, crawlers may hit redirects or 404 pages instead of the final destination.

Examples:

- wrong slug spellings

- extra folder names

- missing trailing path segments

- parameter-heavy URLs that should not be indexed
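The URL problems listed above can be partly caught with a simple lint pass. The sketch below is an assumption-laden example, not a standard tool: `lint_sitemap_url` is a hypothetical helper, `example.com` is a placeholder, and the rules (lowercase paths, no query strings) reflect common conventions that may not fit every site.

```python
from urllib.parse import urlsplit


def lint_sitemap_url(url: str) -> list[str]:
    """Return a list of likely problems with one sitemap URL."""
    problems = []
    if url != url.strip() or " " in url:
        problems.append("whitespace in URL")
    parts = urlsplit(url.strip())
    if parts.scheme not in ("http", "https") or not parts.netloc:
        problems.append("not an absolute http(s) URL")
    if parts.path != parts.path.lower():
        problems.append("uppercase characters in path")
    if "//" in parts.path:
        problems.append("doubled slash in path")
    if parts.query:
        problems.append("query parameters present")
    return problems


print(lint_sitemap_url("https://www.example.com/Blog//my-post?utm_source=x"))
print(lint_sitemap_url("https://www.example.com/blog/my-post"))
```

A lint pass cannot know whether a slug is spelled the way you intended, so it complements a manual review rather than replacing it.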

3. Invalid XML Tags

The Sitemap protocol requires proper XML structure. It must use valid XML tags, entity escaping, and UTF-8 encoding. Required elements like <urlset>, <url>, and <loc> must be correctly written. A typo such as <lock> instead of <loc> can break the file.
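One way to avoid hand-typed tags altogether is to let an XML library write them. The following sketch uses Python's standard `xml.etree.ElementTree` module to build a minimal sitemap. The function name `build_sitemap` and the `example.com` URL are placeholders. The library handles entity escaping (for example `&` becomes `&amp;`) and the UTF-8 declaration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the Sitemap protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> bytes:
    """Build a minimal XML sitemap containing one <url><loc> entry per URL."""
    # Register the sitemap namespace as the default so tags are written without a prefix.
    ET.register_namespace("", SITEMAP_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url in urls:
        url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        loc = ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc")
        loc.text = url  # special characters are escaped on serialization
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


print(build_sitemap(["https://www.example.com/?a=1&b=2"]).decode("utf-8"))
```

Because the tag names live in one place in the code, a typo such as `<lock>` would have to be made once in the script rather than appearing at random in a hand-edited file.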

4. Wrong Protocol Usage

If your site runs on HTTPS but the sitemap still lists HTTP URLs, search engines may see inconsistent signals. It is better to list the preferred canonical version only. Google’s best-practice guidance also stresses using the right URLs and accurate sitemap entries.
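A quick way to spot mixed protocols is to scan the URL list for `http://` entries. This is a minimal sketch with hypothetical helper names (`find_insecure_urls`, `to_https`). Rewriting the scheme is only appropriate if the site really serves every page over HTTPS.

```python
from urllib.parse import urlsplit, urlunsplit


def find_insecure_urls(urls: list[str]) -> list[str]:
    """Return the URLs that use plain HTTP."""
    return [u for u in urls if urlsplit(u).scheme == "http"]


def to_https(url: str) -> str:
    """Rewrite an http:// URL to https://; other URLs are returned unchanged."""
    parts = urlsplit(url)
    if parts.scheme == "http":
        parts = parts._replace(scheme="https")
    return urlunsplit(parts)


urls = ["https://www.example.com/", "http://www.example.com/about"]
print(find_insecure_urls(urls))
print([to_https(u) for u in urls])
```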

5. Broken or Redirected URLs

A sitemap should contain final, live, indexable URLs, not 404 pages and not long redirect chains. Search engines can still discover redirected URLs, but listing the final destination is cleaner and more efficient.
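To see which entries are live, redirected, or missing, you can request each URL without following redirects and sort the status codes into buckets. The sketch below uses Python's standard `http.client`. The names `fetch_status` and `classify_status` are illustrative, and some servers answer `HEAD` requests differently from `GET`, so treat the result as a hint rather than proof.

```python
import http.client
from urllib.parse import urlsplit


def classify_status(status: int) -> str:
    """Sort an HTTP status code into a coarse bucket for sitemap review."""
    if status == 200:
        return "ok"
    if status in (301, 302, 303, 307, 308):
        return "redirect"
    if status in (404, 410):
        return "missing"
    return "other"


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Send a HEAD request and return the status code without following redirects."""
    parts = urlsplit(url)
    conn_cls = (
        http.client.HTTPSConnection
        if parts.scheme == "https"
        else http.client.HTTPConnection
    )
    conn = conn_cls(parts.netloc, timeout=timeout)
    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        conn.request("HEAD", path)
        return conn.getresponse().status
    finally:
        conn.close()


# Example (requires network access):
# print(classify_status(fetch_status("https://www.example.com/")))
```

Anything that lands in the "redirect" bucket is a candidate for replacement with its final destination, and anything in "missing" should be removed from the sitemap.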

6. Sitemap Generator Output Errors

Sometimes the issue is not manual editing at all. The sitemap generator itself may produce bad output due to plugin conflicts, cached versions, migration issues, or outdated configuration. Many real-world sitemap errors come from tools, not just human typos, so generator output deserves the same scrutiny as a hand-edited file.

Why These Errors Often Go Unnoticed

One reason sitemap problems are so harmful is that they often do not break the website visually. Your site may look perfectly fine to users while search engines are dealing with:

- invalid sitemap syntax

- old cached sitemap files

- bad discovery paths

- incorrect robots.txt references

- outdated URL structures

That is why sitemap issues can reduce SEO performance quietly over time instead of causing one obvious technical failure.

How to Identify Sitemap Generator By Spellmistakes

Check Google Search Console

Google Search Console is one of the best places to detect sitemap issues. It can show whether Google could fetch the sitemap and whether there are processing problems. Google has long recommended Search Console as the preferred way to submit sitemaps because it gives direct feedback about retrieval and recognition.

Look for issues such as:

- couldn’t fetch

- invalid URL

- invalid XML

- submitted URLs not selected for indexing

Validate Your Sitemap

The Sitemap protocol requires correct XML formatting, entity escaping, and UTF-8 encoding. A validator or XML parser can catch broken tags and malformed structure before search engines do.
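As an example of what a basic validation pass might look like, the sketch below parses a sitemap with Python's standard XML parser and checks for the required elements. The function name `validate_sitemap` is hypothetical. It only covers plain `<urlset>` files, so a sitemap index file would be reported as having an unexpected root, and it is not a substitute for full schema validation.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def validate_sitemap(xml_text: str) -> list[str]:
    """Return a list of problems found in a <urlset> sitemap; empty means none found."""
    try:
        root = ET.fromstring(xml_text.encode("utf-8"))
    except ET.ParseError as exc:
        # Unclosed or mismatched tags end up here.
        return [f"not well-formed XML: {exc}"]
    if root.tag != f"{{{SITEMAP_NS}}}urlset":
        return [f"unexpected root element: {root.tag}"]
    errors = []
    for index, entry in enumerate(root, start=1):
        if entry.tag != f"{{{SITEMAP_NS}}}url":
            errors.append(f"entry {index}: unexpected element {entry.tag}")
            continue
        loc = entry.find(f"{{{SITEMAP_NS}}}loc")
        if loc is None or not (loc.text or "").strip():
            # A misspelled tag such as <lock> leaves the entry without a <loc>.
            errors.append(f"entry {index}: missing or empty <loc>")
    return errors


good = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://www.example.com/</loc></url></urlset>"
)
print(validate_sitemap(good))
print(validate_sitemap(good.replace("loc>", "lock>")))
```

Note the two different failure modes: a consistently misspelled tag is still well-formed XML and is only caught by the element check, while a mismatched closing tag fails at the parsing stage.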

Manual URL Spot Check

Open a few sample URLs from the sitemap and verify that they:

- load properly

- return a 200 status

- use the preferred HTTPS version

- match the final canonical page

- are not blocked or noindexed
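The checklist above can be written down as a small function so that every sampled URL is judged the same way. This is a sketch under stated assumptions: the `PageObservation` record and the helper names are hypothetical, and the values (status, canonical, noindex, robots blocking) are expected to come from your own crawl or manual inspection.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageObservation:
    """What was observed when a sitemap URL was opened (filled in by you or a crawler)."""
    url: str
    status: int
    canonical: Optional[str]  # canonical URL declared by the page, if any
    noindex: bool
    blocked_by_robots: bool


def pick_sample(urls: list[str], k: int = 5, seed: Optional[int] = None) -> list[str]:
    """Pick up to k URLs at random for a manual spot check."""
    rng = random.Random(seed)
    return rng.sample(list(urls), min(k, len(urls)))


def failed_checks(obs: PageObservation) -> list[str]:
    """Return the spot-check items this page fails."""
    failures = []
    if obs.status != 200:
        failures.append(f"status is {obs.status}, not 200")
    if not obs.url.startswith("https://"):
        failures.append("not an HTTPS URL")
    if obs.canonical is not None and obs.canonical != obs.url:
        failures.append("canonical points to a different URL")
    if obs.noindex:
        failures.append("page is noindexed")
    if obs.blocked_by_robots:
        failures.append("page is blocked by robots.txt")
    return failures


page = PageObservation("https://www.example.com/a", 200, "https://www.example.com/a", False, False)
print(failed_checks(page))
```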


How to Fix Sitemap Generator By Spellmistakes

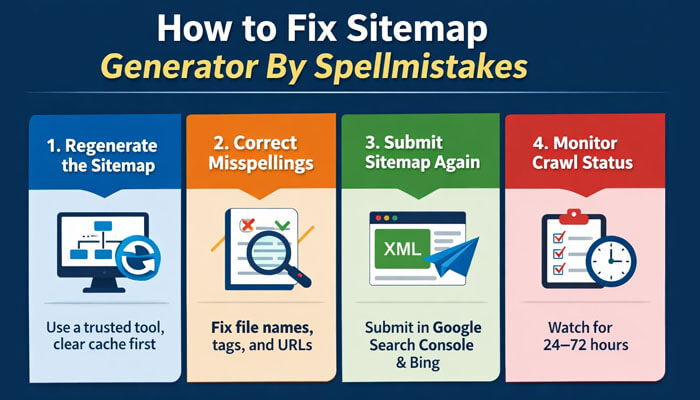

1. Regenerate the Sitemap

The safest fix is often to regenerate the sitemap using a trusted tool, plugin, or CMS feature. If the existing output was cached, clear the cache before regeneration so the corrected sitemap is the one actually served.

2. Correct Misspellings Manually

If the sitemap is managed manually, review:

- file name

- sitemap path

- XML tags

- URL spelling

- canonical URL versions

- HTTPS consistency

- broken and redirected links

3. Submit Sitemap Again

After fixing the sitemap, submit it again through Google Search Console and Bing Webmaster Tools. Google has deprecated the old sitemap ping endpoint, so direct submission through webmaster tools and sitemap discovery through robots.txt are the better methods now.

4. Monitor Crawl Status for 24–72 Hours

After resubmitting the sitemap, monitor crawl and processing status for the next 24 to 72 hours. Sitemap processing and updated reporting are not always instant, so an unchanged status right after submission does not necessarily mean the fix failed.

Sitemap Location and robots.txt

This is often overlooked.

Search engines may discover your sitemap through the robots.txt file, so if the sitemap URL is missing or misspelled there, discovery can be delayed.

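Use a clear reference like this, replacing the example.com placeholder with your own domain:

Sitemap: https://www.example.com/sitemap.xml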

The sitemap protocol supports informing crawlers about sitemap location, and Bing also recommends submitting and managing sitemaps through Webmaster Tools.
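To confirm that the sitemap line in robots.txt is spelled correctly, you can read it back with Python's standard `urllib.robotparser`, which only recognizes a correctly named `Sitemap` directive. The helper name `sitemaps_in_robots` and the `example.com` URLs are placeholders for illustration.

```python
from urllib.robotparser import RobotFileParser


def sitemaps_in_robots(robots_txt: str) -> list[str]:
    """Return the sitemap URLs declared in a robots.txt file's text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap directive was recognized.
    return parser.site_maps() or []


correct = "User-agent: *\nAllow: /\nSitemap: https://www.example.com/sitemap.xml\n"
misspelled = "User-agent: *\nAllow: /\nSitmap: https://www.example.com/sitemap.xml\n"

print(sitemaps_in_robots(correct))
print(sitemaps_in_robots(misspelled))
```

An empty result for a file that you expected to reference a sitemap is a strong hint that the directive name or the line itself is malformed.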

Common Problems and Solutions

| Problem | Cause | Solution |
|---|---|---|
| Sitemap couldn’t be fetched | Wrong file name or wrong URL | Rename correctly to sitemap.xml and ensure public access |
| Invalid XML format | Misspelled or broken tags | Validate XML and fix incorrect tags |
| URLs not indexed | Noindex or blocked pages | Remove blocked or noindex URLs |
| HTTP URLs in sitemap | Mixed protocol usage | Update sitemap to HTTPS only |
| Too many 404 pages | Deleted or incorrect URLs | Remove broken URLs and regenerate sitemap |
| Redirected URLs included | Old URL structure | Replace with final destination URLs |
| Sitemap generator by spellmistake | Plugin issue or manual typo | Regenerate sitemap and recheck output |


Best Practices to Avoid Sitemap Errors

To keep your sitemap accurate and SEO-friendly:

- use one canonical sitemap file

- keep URLs lowercase and consistent

- update the sitemap after major content changes

- avoid including noindex or blocked pages

- test the sitemap after every update

- include only final, indexable URLs

- make sure sitemap URLs match canonical URLs exactly

- use HTTPS consistently across the entire sitemap


These practices align with official sitemap standards and modern webmaster guidance from Google and Bing.

Image and Video Sitemaps

Large or media-heavy websites should pay attention to image and video discovery. Specialized sitemap support can help search engines better understand media assets, and malformed markup can reduce visibility in image or video surfaces. The protocol is still strict, so spelling and formatting mistakes matter here too.

If your site relies heavily on visual content:

- use image and video sitemap support where relevant

- confirm media URLs are crawlable

- avoid broken file paths

- keep metadata clean and up to date
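For media-heavy sites, image entries can be generated in the same way as page entries. The sketch below assumes Google's image sitemap extension namespace and its `image:image` and `image:loc` elements. The function name `build_image_sitemap` and all URLs are placeholders, and you should confirm the current extension details against the official documentation before relying on it.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
# Assumption: Google's image sitemap extension namespace.
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"


def build_image_sitemap(pages: dict[str, list[str]]) -> bytes:
    """Build a sitemap where each page URL lists the image URLs that appear on it."""
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("image", IMAGE_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            image_el = ET.SubElement(url_el, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image_el, f"{{{IMAGE_NS}}}loc").text = image_url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


pages = {"https://www.example.com/gallery": ["https://www.example.com/img/photo-1.jpg"]}
print(build_image_sitemap(pages).decode("utf-8"))
```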

Bing and Other Search Engines

Google is not the only platform that uses sitemap data. Bing Webmaster Tools also supports sitemap submission and monitoring, and Bing has highlighted that sitemaps remain important for discoverability across Bing search experiences, including AI-powered search. Bing also says it typically revisits sitemaps regularly after submission or robots.txt discovery.

That means a clean sitemap can support broader search visibility beyond Google alone.

Recommended Sitemap Generator Tools

Popular options include:

- Yoast SEO

- Rank Math

- Screaming Frog

- XML-Sitemaps.com

No matter which tool you use, always review the final output. Automated generation reduces manual mistakes, but it does not eliminate them.

Sitemap Generator By Spellmistake vs Optimized Sitemap

| Factor | Spellmistake-Prone Sitemap | Optimized Sitemap |
|---|---|---|
| File naming | Incorrect or inconsistent | Standard and clear |
| XML structure | Broken or misspelled tags | Valid XML structure |
| URL accuracy | Typos, redirects, wrong slugs | Clean canonical URLs |
| Crawl efficiency | Low, with wasted crawl budget | Higher and cleaner discovery |
| Indexing success | Partial or delayed | More reliable processing |
| SEO impact | Silent technical weakness | Stronger SEO foundation |
| Maintenance | Frequent manual fixes | Automated and monitored |


Practical 2026 Sitemap Priorities

Instead of making hard predictions, it is smarter to frame 2026 sitemap strategy around practical priorities that already match current search guidance.

AI-Driven Discovery Still Rewards Clean Structure

Search engines are becoming better at understanding site structure, but that makes clean technical signals more valuable, not less. A valid sitemap helps reinforce URL organization and content discovery, especially on larger or more complex sites. Bing has explicitly connected sitemaps to discoverability in AI-powered search.

Index-Only Sitemaps Matter More

Only include URLs that deserve indexing. Low-value, blocked, duplicate, and parameter-heavy pages dilute sitemap quality. Google’s long-standing sitemap best practices support keeping sitemap entries focused on useful, crawl-worthy URLs.

Frequent Sitemap Validation Is Smart

For content-heavy sites, regular audits are worth doing. Monthly checks are a sensible maintenance habit for active sites, with extra checks after migrations, URL changes, or plugin updates.

JavaScript and Dynamic URLs Need Extra Care

If your site depends heavily on JavaScript, the URLs listed in the sitemap should still resolve cleanly and reflect the preferred crawlable version of the page. Google’s JavaScript SEO guidance makes clear that JavaScript sites need careful handling for crawlability and discoverability.

Performance and Quality Still Matter

A sitemap does not bypass quality systems. Pages listed in a sitemap should still be useful, accessible, and technically sound. A sitemap helps discovery, but it cannot compensate for weak page quality or poor technical health.

Zero-Error Technical SEO Is the Goal

This does not mean perfection is always possible, but it does mean that small technical issues deserve attention. Misspelled XML tags, stale URLs, broken paths, and duplicate sitemap versions are easy problems to fix and not worth leaving unresolved.

FAQs

1. Can a single spelling error break a sitemap?

Yes. One incorrect XML tag, file name, or URL can damage sitemap validity or make the file much less useful. The Sitemap protocol requires valid XML structure and correct required elements.

2. What is the most common sitemap generator by spellmistake?

Wrong file names, misspelled XML tags, incorrect URL slugs, and protocol mismatches are among the most common problems. Typical examples are wrong names like sitmap.xml and broken <loc> tags.

3. Do sitemap spelling errors affect SEO rankings?

Not as a direct ranking factor by themselves, but they can affect crawling, discovery, and indexing efficiency, which can reduce organic visibility over time. Google states that sitemaps are for discovery rather than direct ranking boosts.

4. How can I quickly detect sitemap spellmistakes?

Check the sitemap in Google Search Console, validate the XML, and manually test sample URLs from the file.

5. Should I resubmit my sitemap after fixing errors?

Yes. After correcting errors, resubmit the sitemap through Google Search Console or Bing Webmaster Tools and review the status reports afterward.

Conclusion

A sitemap generator by spellmistake may look like a small technical issue, but it can create bigger SEO problems than many site owners realize. A wrong file name, broken XML tag, incorrect URL, outdated cached file, or bad robots.txt reference can reduce the value of your sitemap and slow down discovery.

The good news is that sitemap mistakes are usually fixable. By validating XML, clearing cache before regeneration, keeping URLs lowercase and consistent, listing only clean canonical URLs, and monitoring your sitemap after resubmission, you can keep your technical SEO much stronger.

As search engines continue improving discovery systems, clean and accurate sitemap management will remain one of the easiest technical SEO wins you can control.