Over the past year, both Ahrefs and Semrush have shipped AI visibility features. Ahrefs launched Brand Radar. Semrush rolled out its AI Visibility Toolkit. The narrative writes itself: The incumbents are catching up, the gap is closing, and maybe you don’t need a dedicated AEO tool after all.

That narrative is wrong. Or at least, incomplete.

SEO tools were built around keywords, rankings, and backlinks. Their AI features are layered on top of that architecture, which means they inherit its assumptions. Purpose-built AEO tools were designed from the ground up around prompts, AI crawlers, and how large language models consume and cite content. That’s a fundamentally different problem space.

This article walks through nine specific problems where AEO tools go further than SEO tools, even the ones with new AI features. To make the comparison concrete, we’ll use Scrunch as the AEO benchmark throughout: a purpose-built AEO platform trusted by enterprise brands like Lenovo, Akamai, and ADP. The analysis draws on hands-on product testing, public product documentation, third-party reviews, and independent accuracy studies.

1. Websites that AI agents can’t reliably parse

Most modern websites are built for humans: heavy JavaScript, dynamic components, progressive rendering. AI bots often can’t parse that content reliably, which means pages optimized for human conversion can be effectively invisible to the AI platforms increasingly driving discovery.

The catch is that you can’t rebuild your website for AI without risking the human experience that drives revenue. You need to give AI what it needs without breaking anything that works.

Neither Ahrefs nor Semrush addresses this. At most, they’ll flag that a crawlability problem exists. They won’t fix it.

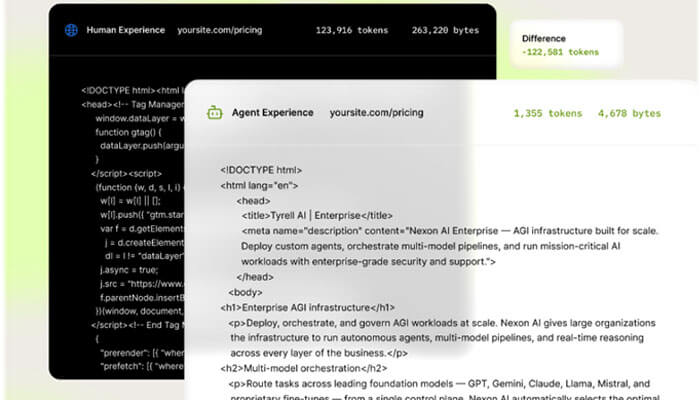

A small number of AEO platforms are building content delivery infrastructure to solve it. Scrunch’s Agent Experience Platform (AXP) works at the CDN level (Akamai, Cloudflare, and Vercel) and intercepts AI bot traffic before it reaches your JavaScript-heavy frontend.

Scrunch’s AXP makes pages consumable for AI agents

AXP serves a parallel, AI-optimized version of each page: clean, server-rendered HTML, structured summaries, clarified entity definitions. Human visitors see the unchanged site. AI agents get content they can actually parse and cite.
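To make the routing idea concrete, here is a minimal sketch of user-agent-based variant selection. It is not Scrunch’s implementation: the token list, the function names, and the two variant labels are all assumptions chosen for illustration, and a production setup would run at the CDN edge and verify bot identity rather than trust the header alone.

```python
# Hypothetical sketch: pick which version of a page to serve based on the
# User-Agent header. Token list and variant names are illustrative assumptions.
AI_AGENT_TOKENS = (
    "gptbot",
    "chatgpt-user",
    "oai-searchbot",
    "claudebot",
    "perplexitybot",
)


def is_ai_agent(user_agent: str) -> bool:
    """Return True if the User-Agent contains a known AI bot token."""
    ua = user_agent.lower()
    return any(token in ua for token in AI_AGENT_TOKENS)


def choose_variant(user_agent: str) -> str:
    """AI agents get the parallel version; everyone else gets the normal site."""
    return "ai_optimized_html" if is_ai_agent(user_agent) else "original_site"
```

The point of the design is that the decision happens before the request reaches the frontend, so the human-facing site never changes.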

2. Site audits that miss AI-specific crawl and parsing issues

The technical issues that hurt AI visibility aren’t always the same ones that hurt Google rankings. JavaScript rendering failures, access rules that block AI user agents, and content structured for humans rather than for answer extraction don’t reliably show up as problems in a traditional SEO audit.

That matters because you can’t optimize content for AI if AI can’t access or parse it in the first place. Auditing for AI readability is the upstream step that makes every other optimization effort work.

Ahrefs and Semrush site audits are built around Googlebot behavior and Google’s ranking signals. They don’t diagnose AI crawlability, AI-readable content structure, or the JavaScript issues that specifically break AI parsing.

Dedicated AEO tools audit for AI consumption directly. Scrunch’s Site Maps renders your full website as a visual tree, with every page showing Audit Score, Agent Traffic, Citations, and AI Referrals at a glance.

Its Deep AI Audit goes further, diagnosing JavaScript rendering failures, metadata gaps, robots.txt rules blocking AI bots, and content structure issues that prevent answer extraction. It’s purpose-built for the AI-specific crawl and parsing problems that Ahrefs and Semrush audits won’t find.
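One of these checks, robots.txt rules that block AI bots, is easy to approximate yourself. The sketch below uses Python’s standard `urllib.robotparser` against an inline sample file. The sample rules, the agent list, and the `blocked_ai_agents` helper are assumptions for illustration, and this says nothing about how Scrunch’s audit works internally.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content (an assumption for this example).
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Illustrative list of AI user agents to test; extend as needed.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_agents(robots_txt, url, agents=AI_USER_AGENTS):
    """Return the agents that the given robots.txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


print(blocked_ai_agents(ROBOTS_TXT, "https://example.com/pricing"))
# prints ['GPTBot']
```

A check like this only covers access rules. It won’t catch rendering or content-structure problems, which need an actual fetch and parse of the page.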

3. No visibility into AI bot activity on your site

GPTBot, ClaudeBot, PerplexityBot, and a growing fleet of AI user agents are crawling sites right now to build context for AI answers. If you can’t see which bots are hitting which pages, or whether they’re hitting your site at all, you have no way to diagnose why AI platforms aren’t citing you.

AI bot activity is a leading indicator of visibility. A page AI crawlers can’t access is a page AI will never cite.

Ahrefs CMO Tim Soulo has publicly confirmed that Brand Radar doesn’t track AI crawler activity. Semrush’s toolkit focuses on AI response measurement, not server-side bot visibility. Neither tool tells you what’s happening at the infrastructure level.

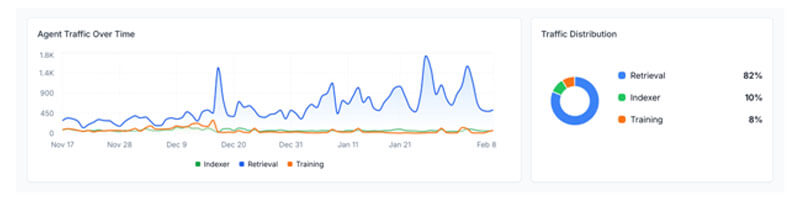

Scrunch’s Agent Traffic tab tracks this directly. You can see which bots are crawling your site, how often, which pages they’re hitting, and how that activity breaks down across three bot types: retrieval bots (fetching content for live user prompts), indexer bots (building their own search index), and training bots (scraping for model improvement).

Retrieval traffic is the one to watch most closely. It’s a real-time signal that a human somewhere just prompted an AI about something related to your brand.

A Scrunch dashboard displaying three types of bots visiting a site

The dashboard also shows Top Bot Agents (which AI platforms are most active on your site), Top Content Pages (which pages retrieval bots hit most), and a chronological log of recent bot requests with timestamps, bot type, page path, and request status.
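If you want a rough version of this breakdown from your own server logs, the three-way split can be sketched as a lookup on the User-Agent string. The mapping below is an assumption based on how the vendors publicly describe their crawlers, not Scrunch’s classification logic, and the `classify` and `summarize` helpers are hypothetical.

```python
from collections import Counter
from typing import Optional

# Assumed mapping from user-agent token to bot type (illustrative only).
BOT_TYPES = {
    "chatgpt-user": "retrieval",     # fetches pages for a live user prompt
    "perplexity-user": "retrieval",
    "oai-searchbot": "indexer",      # builds a search index
    "perplexitybot": "indexer",
    "gptbot": "training",            # collects data for model training
    "claudebot": "training",
}


def classify(user_agent: str) -> Optional[str]:
    """Return the bot type for a User-Agent, or None for non-AI traffic."""
    ua = user_agent.lower()
    for token, bot_type in BOT_TYPES.items():
        if token in ua:
            return bot_type
    return None


def summarize(requests):
    """Count requests per bot type from (path, user_agent) pairs."""
    counts = Counter()
    for _path, user_agent in requests:
        bot_type = classify(user_agent)
        if bot_type is not None:
            counts[bot_type] += 1
    return counts
```

Feeding it parsed access-log rows, for example `summarize([("/pricing", "ChatGPT-User/1.0"), ("/blog", "GPTBot/1.2")])`, gives one retrieval hit and one training hit. User-Agent strings can be spoofed, so treat the output as an estimate.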

Ahrefs and Semrush show you what AI said about you. Scrunch shows you whether AI can even find you.

4. Visibility gaps surfaced without a path to close them

A dashboard can tell you that you appear in 23% of AI responses while a competitor appears in 67%. That number is useful. What to do about it (which pages to fix, which content to create, and in what order) is a different problem entirely.

Most teams don’t have time for weeks of manual cross-referencing between prompt data, citation data, and content inventories. The tools that win are the ones that turn diagnosis into action.

Ahrefs and Semrush surface gaps and flag opportunities, but the optimization work is entirely on you. There’s no path from “you’re losing here” to “here’s the fixed page.” Neither platform detects when a site is simply missing content for the queries that matter.

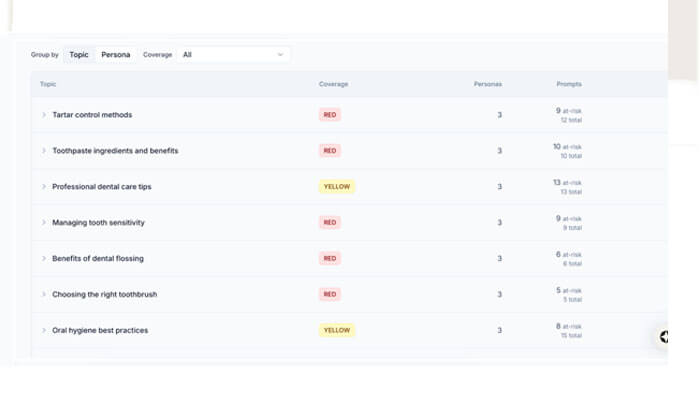

Scrunch addresses this in two ways. Content Gaps auto-detects when your site is missing content for tracked prompts and surfaces those blind spots directly on the home screen. Every gap is classified as Red (no content found), Yellow (limited content found), or Green (sufficient content found), with a count of at-risk prompts.

Teams can drill into any gap to see associated prompts and matching pages, filterable by topic or persona. The analysis updates automatically as new content is detected, removing resolved gaps as they’re addressed.

A list of prompts with coverage-level classification
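The Red, Yellow, and Green scheme can be pictured as a simple threshold rule. In the sketch below, the cutoff for “sufficient” content and the idea of counting matching pages per prompt are assumptions made for illustration. Scrunch does not publish its thresholds here, and treating Red and Yellow together as “at risk” is likewise a guess.

```python
def classify_gap(matching_pages: int, sufficient_at: int = 3) -> str:
    """Map a count of matching pages to a coverage level.

    sufficient_at is a hypothetical threshold, not a documented value.
    """
    if matching_pages <= 0:
        return "Red"      # no content found
    if matching_pages < sufficient_at:
        return "Yellow"   # limited content found
    return "Green"        # sufficient content found


def at_risk_prompts(page_counts: dict) -> list:
    """Return prompts whose coverage is not Green (assumed definition)."""
    return [p for p, n in page_counts.items() if classify_gap(n) != "Green"]
```

For example, `at_risk_prompts({"best crm for startups": 0, "crm pricing": 5})` returns only the first prompt.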

For pages that exist but underperform, Scrunch’s Optimizer generates a customized plan based on page intent, target persona, and tracked prompts. Scrunch can also auto-generate an AI-optimized version of that page and push it directly to AI agents via AXP. The gap between “we have a problem” and “the problem is fixed” collapses from weeks to hours.

5. Inaccurate brand mention counts in AI responses

Accuracy problems have been reported in SEO tools that added AI visibility features. In one reported instance, Ahrefs Brand Radar showed just three ChatGPT mentions for a brand when the true number was significantly higher. When the underlying count is wrong, every decision downstream is built on distorted data: content strategy, competitive benchmarking, board reporting.

That matters more in AI visibility than almost anywhere else. Teams are still building the internal credibility to get headcount and budget behind this discipline. A dashboard that gets contradicted in the first board review doesn’t survive the second one.

Ahrefs Brand Radar uses a snapshot-based methodology that samples AI responses at scheduled intervals. This approach has been linked to significant undercounting on ChatGPT and Perplexity. Semrush relies partly on synthetic prompts generated from domain and location rather than sampling live responses at depth.

Purpose-built AEO platforms collect actual AI responses and check them for brand mentions directly. Scrunch treats brand presence as a binary measure (mentioned or not), applies regex pattern matching across live responses, and aggregates across prompt variants over 90-day windows to smooth daily fluctuations into reliable trend lines. The methodology is designed to produce numbers you can defend in a room.
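The binary approach is simple to illustrate. The sketch below is a simplified take on the idea, not Scrunch’s code: the brand names, the sample responses, and the helper functions are hypothetical, and the responses are assumed to be already filtered to the reporting window.

```python
import re


def brand_pattern(names):
    """Compile a case-insensitive, whole-word pattern for any brand alias."""
    alternatives = "|".join(re.escape(name) for name in names)
    return re.compile(rf"\b(?:{alternatives})\b", re.IGNORECASE)


def is_mentioned(response: str, pattern) -> bool:
    """Binary presence: True if the brand appears at least once."""
    return pattern.search(response) is not None


def presence_rate(responses, pattern) -> float:
    """Share of responses that mention the brand at all."""
    if not responses:
        return 0.0
    return sum(is_mentioned(r, pattern) for r in responses) / len(responses)


pattern = brand_pattern(["Acme", "Acme Corp"])
responses = [
    "Acme is a popular choice.",
    "Consider Globex instead.",
    "Many teams use ACME Corp and acme tools.",  # counts once, not twice
    "No vendor stands out.",
]
print(presence_rate(responses, pattern))  # prints 0.5
```

Counting presence rather than raw occurrences keeps one verbose response from inflating the number.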

6. Incomplete coverage across AI platforms

Your audience isn’t using one AI tool. Some people live in ChatGPT. Others default to Perplexity, Claude, Gemini, or Google’s AI Mode. A visibility strategy that covers only a subset of those platforms has blind spots by design.

Brand presence varies dramatically across platforms. A brand winning in ChatGPT can be invisible in Perplexity. Without coverage that matches where your audience actually searches, you’ll optimize for the platforms you can see and lose ground in the ones you can’t.

Ahrefs Brand Radar tracks six AI surfaces: ChatGPT, Perplexity, Gemini, Copilot, AI Overviews, and AI Mode. Semrush tracks five: ChatGPT, Gemini, Perplexity, AI Overviews, and AI Mode. Both miss Claude and Meta AI entirely.

Dedicated AEO platforms invest in integrations across every major AI surface and expand coverage as new platforms emerge. Scrunch tracks nine: ChatGPT, Claude, Perplexity, Gemini, Google AI Mode, Google AI Overviews, Microsoft Copilot, Meta AI, and Grok.

7. Citation data without influence weighting

A citation count alone doesn’t tell you which sources are actually shaping AI answers. A domain cited in many responses across many unique prompts is dramatically more influential than one cited in a single narrow context, but most citation reports treat every citation as an equivalent data point.

That distinction matters if you’re investing in digital PR or contributed articles to earn placements in cited sources. Chasing low-influence citations is wasted effort. You need to know which sources are high-leverage before you spend anything.

Ahrefs reports cited domains and cited pages but stops at volume. Semrush surfaces competitor and source mentions but doesn’t weight them by cross-prompt influence. Neither tool helps you prioritize where to focus.

Scrunch assigns every cited source an Influence Score, calculated by multiplying the percentage of responses that cited the source by the number of unique prompts it appeared in. A source cited frequently but narrowly scores differently than one cited consistently across a wide range of prompts.
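A small worked example shows why the multiplication matters. The sketch follows the formula as described above. Expressing the percentage on a 0 to 100 scale, the data layout, and the sample domains are assumptions, so the absolute numbers will differ from anything Scrunch reports.

```python
def influence_score(responses, source):
    """Percentage of responses citing source, times unique prompts citing it.

    responses: list of (prompt_id, set_of_cited_domains) pairs.
    """
    if not responses:
        return 0.0
    citing = [(prompt, cited) for prompt, cited in responses if source in cited]
    pct_of_responses = 100 * len(citing) / len(responses)
    unique_prompts = len({prompt for prompt, _ in citing})
    return pct_of_responses * unique_prompts


# Ten responses: a.com is cited 4 times, all for one prompt;
# b.com is also cited 4 times, but across four different prompts.
responses = [
    ("p1", {"a.com"}), ("p1", {"a.com"}), ("p1", {"a.com"}),
    ("p1", {"a.com", "b.com"}),
    ("p2", {"b.com"}), ("p3", {"b.com"}), ("p4", {"b.com"}),
    ("p2", set()), ("p3", set()), ("p4", set()),
]
print(influence_score(responses, "a.com"))  # prints 40.0
print(influence_score(responses, "b.com"))  # prints 160.0
```

Both domains have the same citation count, yet the broadly cited one scores four times higher. A raw citation report hides exactly that difference.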

Scrunch also runs automated gap analysis, flagging prompts where competitors are mentioned but your brand isn’t. You can see exactly where influence is concentrated and where you’re being shut out.
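The gap rule itself is straightforward to express: flag a prompt when at least one competitor is mentioned and your brand is not. The sketch below assumes you already have, per prompt, the set of brands that appeared in responses. The function and the sample data are hypothetical.

```python
def competitive_gaps(mentions_by_prompt, brand, competitors):
    """Return {prompt: [competitors mentioned]} where brand is absent."""
    rivals = set(competitors)
    gaps = {}
    for prompt, mentioned in mentions_by_prompt.items():
        present_rivals = sorted(mentioned & rivals)
        if present_rivals and brand not in mentioned:
            gaps[prompt] = present_rivals
    return gaps


mentions = {
    "best crm for startups": {"Globex", "Initech"},
    "crm pricing comparison": {"Acme", "Globex"},
    "what is a crm": set(),
}
print(competitive_gaps(mentions, "Acme", ["Globex", "Initech"]))
# prints {'best crm for startups': ['Globex', 'Initech']}
```

Prompts where nobody is mentioned are left out on purpose, since there is no competitor to displace there.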

8. Prompt monitoring that lacks enterprise-grade scale and filtering

Enterprises need to track thousands of prompts across multiple personas, regions, languages, funnel stages, and brand lines. A tool that caps custom prompts or offers flat filtering can’t support the granularity enterprise teams actually need.

Aggregate visibility numbers hide the performance patterns that matter. A brand might look strong overall while losing badly for its top-revenue persona or in its highest-growth market. Without real filtering, those patterns stay invisible.

Semrush caps custom prompts at 50 on Starter, 100 on Pro+, and 200 on Advanced, with add-ons priced at $60 per 50 additional prompts. Ahrefs offers higher prompt volumes but only limited native filtering by persona, funnel stage, or region.
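To see how those caps play out at enterprise volumes, here is a quick back-of-the-envelope calculation using only the figures quoted above. It assumes add-ons are sold in whole blocks of 50 and are available on every tier, and it ignores base plan pricing and the billing period, none of which are stated here.

```python
import math

# Figures quoted in the text above.
INCLUDED_PROMPTS = {"Starter": 50, "Pro+": 100, "Advanced": 200}
ADDON_BLOCK_SIZE = 50   # prompts per add-on
ADDON_BLOCK_PRICE = 60  # USD per add-on


def addon_cost(plan: str, prompts_needed: int) -> int:
    """Add-on cost in USD to reach prompts_needed (whole blocks assumed)."""
    extra = max(0, prompts_needed - INCLUDED_PROMPTS[plan])
    blocks = math.ceil(extra / ADDON_BLOCK_SIZE)
    return blocks * ADDON_BLOCK_PRICE


print(addon_cost("Advanced", 1000))  # prints 960
```

Tracking 1,000 prompts on Advanced means 16 add-on blocks on top of the plan itself, and that is before multiplying across personas or regions.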

Scrunch’s Enterprise plan supports fully custom prompt volumes. Users can create personas with unique geographies, auto-generate prompts tied to those personas, and filter all data by persona, country, language, topic, funnel stage, branded vs. non-branded, and custom tags. The filtering structure maps to how marketing organizations are actually built, not how the tool was easiest to design.

9. AI visibility tooling that doesn’t meet enterprise security standards

Enterprise buyers need more than a working product. They need SOC 2 Type II certification, SSO, role-based access control, audit logs, regional compliance (GDPR, CCPA), and the ability to manage multiple brands and regions from a single workspace. Without these, AI visibility tooling doesn’t make it through procurement.

That bar is only getting higher. AI visibility is becoming a board-level metric, which means the tools behind it need to hold up in regulated industries like financial services and healthcare. Your CISO has to sign off on this.

Ahrefs and Semrush meet baseline enterprise security requirements for their core SEO products, but their AI visibility features are layered into the same broad platform. They don’t offer AI-specific enterprise workflows like multi-brand AI workspaces or governance scoped to AI data.

Scrunch is built for this from the ground up: SOC 2 Type II compliant, SAML/OAuth SSO, RBAC, GDPR and CCPA compliance, audit logs, guaranteed SLAs, multi-brand workspaces, and multi-region and multi-language support. Enterprise customers, including Lenovo, Akamai, and ADP, use it to monitor millions of prompts and hundreds of thousands of pages every week. Security and governance are part of the architecture.

From measurement to action

The through-line across all nine problems is the same. SEO tools, even with new AI features, are measurement platforms built on a keyword-and-ranking data model. They tell you what’s happening. They don’t fix it, deliver optimized content to AI agents, diagnose why crawlers can’t parse your site, or weight citation influence by cross-prompt reach. They weren’t built to. AEO tools go further because they were built prompt-first for a different problem.

The decision isn’t complicated. If your team only needs baseline AI mention tracking alongside existing SEO work, Ahrefs Brand Radar or Semrush’s AI Visibility Toolkit can cover that. If you’re treating AI visibility as a strategic channel, with real optimization work, enterprise governance, and accountability for business outcomes, the tooling needs to go deeper than a dashboard.

Use these nine problems as your evaluation framework when assessing the category. The right tool is the one that can actually solve them.