Search engine optimization relies heavily on technical website configuration. One of the most important yet often overlooked technical files is the robots.txt file. Even a small mistake in this file, such as a spelling error, incorrect syntax, or a formatting issue, can prevent search engines from properly crawling a website. Spelling mistakes often creep in when robots.txt files are generated: directives get typed incorrectly, or automated tools produce invalid syntax.

Many website owners generate robots.txt with a robots.txt generator tool to automate the process. Online robots.txt generators simplify the job, but configuration errors can still occur if the generated file is not reviewed carefully.

These robots.txt configuration mistakes may block important pages from search engines or cause indexing problems.

Understanding how to create a robots.txt file, avoid robots.txt syntax errors, and properly configure crawling rules is essential for maintaining strong technical SEO.

In this guide, we explore:

- What robots.txt files are

- Why spelling mistakes in robots.txt are dangerous

- How to generate robots.txt files correctly

- Robots.txt generators and automation tools

- Common robots.txt spelling mistakes

- Robots.txt syntax rules

- Robots.txt vs meta robots tags

- Robots.txt testing tools

- Crawl budget optimization

- Robots.txt for WordPress websites

- Advanced robots.txt directives

- Security misconceptions

- SEO best practices checklist

This comprehensive guide helps bloggers, developers, and SEO professionals correctly create robots.txt files and avoid mistakes that could harm search rankings.

What Is a Robots.txt File?

A robots.txt file is a plain text document placed in the root directory of a website that instructs search engine bots about which pages they should or should not crawl.

Search engines such as Google and Bing use automated programs called web crawlers or spiders to discover and index web pages. Before crawling a site, these bots typically check the robots.txt file to understand which areas of the site are accessible.

Website owners commonly use robots.txt to prevent crawlers from accessing:

- admin panels

- login pages

- internal search results

- duplicate content directories

- staging environments

A basic robots.txt file looks like this:

User-agent: *
Disallow: /admin/
Allow: /

Sitemap: https://example.com/sitemap.xml

When properly configured, robots.txt helps search engines crawl a website efficiently and apply the crawling rules you intend.
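One way to sanity-check a file like this before publishing is Python's standard-library urllib.robotparser. The minimal sketch below assumes the example.com rules above (with a User-agent: * line, since the parser ignores rules outside a User-agent group) and prints which paths a generic crawler may fetch. Treat it as an approximation: robotparser applies the first matching rule, while Google applies the longest matching one, so the two can disagree when Allow and Disallow rules overlap.

```python
from urllib.robotparser import RobotFileParser

# Basic example.com rules; the parser only applies rules inside a User-agent group.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Allow: /

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse from a string instead of fetching a URL

for path in ["/", "/blog/first-post", "/admin/", "/admin/settings"]:
    allowed = parser.can_fetch("*", "https://example.com" + path)
    print(f"{path:18} {'allowed' if allowed else 'blocked'}")
```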

How to Generate Robots.txt File Correctly (Step-by-Step Guide)

Learning how to generate robots.txt correctly is important for avoiding configuration mistakes and syntax errors. Many technical SEO issues arise from spelling mistakes made while generating robots.txt files, where directives are misspelled or incorrectly structured.

Whether you create robots.txt manually or use a generator, the file must follow the syntax rules of the Robots Exclusion Protocol, which was standardized as RFC 9309 in 2022.

Step 1: Create a Plain Text File

Open a text editor such as:

- Notepad

- VS Code

- Sublime Text

Save the file with the name:

robots.txt

This allows you to create a robots.txt file for your website manually.

Step 2: Define the User-Agent

The User-agent directive specifies which crawler the rules apply to.

Example:

User-agent: *

The wildcard * means the rules apply to every search engine bot that does not have a more specific User-agent group of its own.
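To see how User-agent groups interact, the sketch below parses two groups with Python's urllib.robotparser. The paths are made up for illustration; Googlebot and Bingbot are real crawler names. A crawler follows the group that names it and falls back to the * group only when no group matches, so Googlebot here ignores the /drafts/ rule.

```python
from urllib.robotparser import RobotFileParser

# Two groups: a fallback for every crawler, and one just for Googlebot.
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/

User-agent: Googlebot
Disallow: /experiments/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

drafts = "https://example.com/drafts/post"
experiments = "https://example.com/experiments/a"

print(parser.can_fetch("Bingbot", drafts))         # False: no Bingbot group, so "*" applies
print(parser.can_fetch("Bingbot", experiments))    # True
print(parser.can_fetch("Googlebot", drafts))       # True: its own group has no /drafts/ rule
print(parser.can_fetch("Googlebot", experiments))  # False
```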

Step 3: Add Disallow Rules

Use the Disallow directive to block specific directories or pages.

Example:

Disallow: /private/

The Disallow rule instructs search engines not to crawl those paths.
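Disallow values are matched as path prefixes. This small sketch, again using Python's urllib.robotparser with hypothetical example.com paths, shows that Disallow: /private/ blocks everything under that directory but not /private without the trailing slash, or a sibling such as /private-notes.

```python
from urllib.robotparser import RobotFileParser

parser = RobotFileParser()
parser.parse(["User-agent: *", "Disallow: /private/"])

# Each path is compared against the rule as a plain prefix.
for path in ["/private/", "/private/report.html", "/private", "/private-notes"]:
    blocked = not parser.can_fetch("*", "https://example.com" + path)
    print(path, "blocked" if blocked else "allowed")
```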

Step 4: Add Sitemap URL

Including a sitemap helps search engines discover important pages faster.

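Example:

Sitemap: https://example.com/sitemap.xml

The Sitemap line must contain the full, absolute URL of the sitemap.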
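A quick way to confirm that crawlers can read the Sitemap line is to parse the file and list the sitemaps it declares. This sketch assumes Python 3.8 or later, where urllib.robotparser gained site_maps(), and uses the example.com URL from this guide; the absolute-URL check is an illustrative extra.

```python
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# site_maps() returns the declared Sitemap URLs, or None if the file lists none.
sitemaps = parser.site_maps() or []
print(sitemaps)  # ['https://example.com/sitemap.xml']

# Flag sitemap URLs that are not fully qualified (scheme plus host).
for url in sitemaps:
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        print("Sitemap URL is not absolute:", url)
```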

Step 5: Upload the File to the Root Directory

Upload the robots.txt file so it is accessible at:

https://example.com/robots.txt

Search engine crawlers automatically look for robots.txt at the root directory.
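Because crawlers only ever request robots.txt from the root of a host, the file's URL can be derived from any page URL. The helper names below are hypothetical; the sketch uses only Python's urllib.parse. Note that each host has its own file, so blog.example.com is governed by blog.example.com/robots.txt, not by the file on example.com.

```python
from urllib.parse import urlsplit, urlunsplit

def robots_url_for(page_url: str) -> str:
    """Return the robots.txt URL a crawler consults for page_url.

    Only the scheme and host (plus port, if any) are kept; the path is
    always /robots.txt, however deep the page is.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))

def is_root_location(robots_url: str) -> bool:
    """True if robots_url points at /robots.txt in the site root."""
    return urlsplit(robots_url).path == "/robots.txt"

print(robots_url_for("https://example.com/blog/post?id=7"))  # https://example.com/robots.txt
print(is_root_location("https://example.com/files/robots.txt"))  # False
```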

Robots.txt Generators and Robots.txt File Generator Tools

Many website owners prefer to build the file automatically with a robots.txt generator.

A robots.txt file generator lets users select which pages should be blocked or allowed without writing the syntax by hand.

Examples include:

- technical SEO platforms

- CMS plugins

- free robots.txt generator tools

- online platforms for creating robots.txt files

- online robots.txt generator services

These tools generate crawling rules in valid syntax automatically. However, if the configuration is not checked carefully, mistakes can still slip through, such as a mistyped path or a rule that blocks the wrong directory.

A free online robots.txt generator can help beginners avoid syntax errors while they learn how to configure robots.txt correctly.
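Whatever tool you use, the core idea of a generator can be sketched in a few lines: rules are entered as data, and only known directive names are written out, so a typo like Disalow fails loudly instead of landing in the file. This is a hypothetical minimal generator in Python, not the behavior of any particular product.

```python
from __future__ import annotations

# Hypothetical generator: only whitelisted rule directives are ever written.
VALID_RULE_DIRECTIVES = {"Allow", "Disallow"}

def build_robots_txt(groups: dict[str, list[tuple[str, str]]],
                     sitemaps: list[str]) -> str:
    lines: list[str] = []
    for user_agent, rules in groups.items():
        lines.append(f"User-agent: {user_agent}")
        for directive, path in rules:
            if directive not in VALID_RULE_DIRECTIVES:
                raise ValueError(f"unknown directive {directive!r}")
            if path and not path.startswith("/"):
                raise ValueError(f"path must start with '/': {path!r}")
            lines.append(f"{directive}: {path}")
        lines.append("")  # a blank line separates groups
    lines.extend(f"Sitemap: {url}" for url in sitemaps)
    return "\n".join(lines) + "\n"

print(build_robots_txt(
    {"*": [("Disallow", "/admin/"), ("Allow", "/")]},
    ["https://example.com/sitemap.xml"],
))
```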

Where the Robots.txt File Should Be Located

The robots.txt file must always be located in the root directory of the website.

Correct location:

https://example.com/robots.txt

Incorrect locations include:

https://example.com/files/robots.txt

Search engine bots check the root directory for robots.txt instructions before crawling other pages.

Why Robots.txt Is Important for SEO

The robots.txt file acts as a guide for search engine crawlers.

Proper configuration helps SEO in several ways.

Crawl Budget Optimization

Search engines allocate a limited crawl budget for each website. Robots.txt helps crawlers focus on important pages rather than wasting resources on unnecessary ones.

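Keep Crawlers Away from Sensitive Pages

Admin panels, login pages, and private directories offer nothing to searchers, so crawlers should not spend time on them. Note that robots.txt controls crawling, not indexing: a disallowed URL can still appear in search results if other pages link to it. To keep a page out of results, use a noindex meta robots tag (on a page crawlers are allowed to fetch) or require authentication.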

Improved Website Crawling

A well-structured robots.txt file helps search engines navigate the site architecture more efficiently.

Why Spelling Matters in Robots.txt Files

The robots.txt file must be named exactly “robots.txt” and placed in the root directory of your domain. Search engines only recognize this exact name, and any variation will cause the file to be ignored.

Even a minor spelling error can render the file invisible to search engine crawlers. In such cases, search engines assume there are no restrictions on crawling, which can lead to unintended consequences.

Correct vs Incorrect Robots.txt File Names

| Correct File Name | Incorrect Versions |
|---|---|
| robots.txt | robot.txt |
| robots.txt | robots.text |
| robots.txt | robots.txt.txt |
| robots.txt | robots.html |

Consequences of Naming Errors:

- Search engines ignore your crawl rules.

- Sensitive pages may get indexed unintentionally.

- Crawl budget may be wasted on irrelevant pages.

Ensuring the correct spelling and placement of your robots.txt file is crucial for proper crawling and indexing control.
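The naming mistakes in the table can be caught with a simple check before upload. The function below is a hypothetical helper, a sketch that compares a file name against the exact string robots.txt and explains the most common deviations.

```python
from typing import Optional

EXPECTED_NAME = "robots.txt"

def filename_problem(name: str) -> Optional[str]:
    """Describe what is wrong with a robots file name, or return None if it is correct."""
    if name == EXPECTED_NAME:
        return None
    if name.startswith(EXPECTED_NAME):            # e.g. robots.txt.txt
        return f"extra extension after {EXPECTED_NAME!r}"
    stem, _, ext = name.partition(".")
    if stem != "robots":                          # e.g. robot.txt
        return f"base name should be 'robots', not {stem!r}"
    return f"extension should be '.txt', not '.{ext}'"   # e.g. robots.html

for name in ["robots.txt", "robot.txt", "robots.text", "robots.txt.txt", "robots.html"]:
    print(f"{name:15} {filename_problem(name) or 'OK'}")
```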

Real SEO Problem Caused by Robots.txt Mistakes

Many websites have accidentally blocked their entire site due to incorrect robots.txt configuration.

Example:

User-agent: *
Disallow: /

This rule prevents search engines from crawling any page on the website.

If such a rule is accidentally deployed on a live site, it can cause severe ranking and indexing issues.

This is why robots.txt files should always be tested before publishing.
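A cheap safeguard is to refuse deployment whenever the new file blocks the home page for generic crawlers. The sketch below uses Python's urllib.robotparser; the function name and the example.com site are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

def blocks_homepage(robots_txt: str, site: str = "https://example.com") -> bool:
    """True if a generic crawler ("*") would be barred from the home page."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch("*", site + "/")

print(blocks_homepage("User-agent: *\nDisallow: /\n"))        # True: do not deploy
print(blocks_homepage("User-agent: *\nDisallow: /admin/\n"))  # False
```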

What Happens When Robots.txt Files Have Spelling Mistakes?

Robots.txt files follow strict syntax rules.

If directives are misspelled or incorrectly formatted, search engines may ignore them.

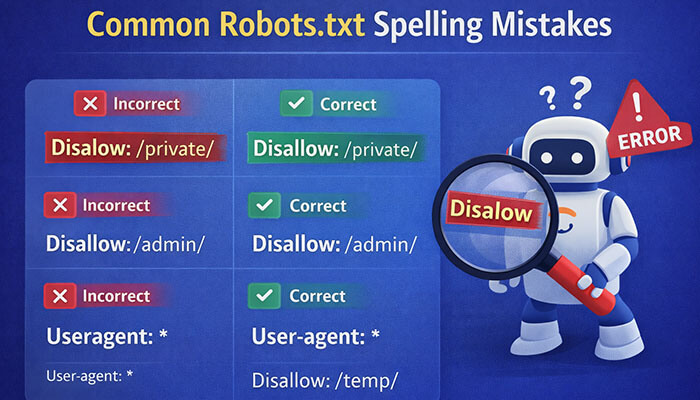

Example:

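Incorrect:

Disalow: /private/

Correct:

Disallow: /private/

A crawler that does not recognize the misspelled directive skips the line, so /private/ stays open to crawling. Google's open-source parser happens to tolerate a few common misspellings like this one, but other crawlers do not, so never rely on that leniency.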
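Parsers generally skip lines they do not understand without any warning (Python's urllib.robotparser, for example, silently ignores a Disalow line), so it helps to lint directive names yourself. This hypothetical linter flags unknown names and uses difflib from the Python standard library to suggest the closest known directive; the list of known directives is limited to the four covered in this guide and is an assumption you can extend.

```python
import difflib

# Directive names covered in this guide (lowercase); extend if you use others.
KNOWN = ["user-agent", "disallow", "allow", "sitemap"]

def lint_directives(robots_txt: str) -> list:
    """Return (line_number, directive, suggestion) for unrecognized directives.

    The suggestion is the closest known directive, or "" if none is close.
    """
    findings = []
    for lineno, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()   # drop comments and whitespace
        if not line:
            continue
        name, sep, _ = line.partition(":")
        if not sep:                           # no "name: value" pair at all
            findings.append((lineno, line, ""))
            continue
        key = name.strip().lower()
        if key not in KNOWN:
            close = difflib.get_close_matches(key, KNOWN, n=1, cutoff=0.6)
            findings.append((lineno, name.strip(), close[0] if close else ""))
    return findings

print(lint_directives("User-agent: *\nDisalow: /private/\n"))  # [(2, 'Disalow', 'disallow')]
```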

Common Spelling Mistakes When Generating Robots.txt

Many website owners create robots.txt files manually or through SEO tools, and this is where spelling mistakes can frequently happen. Here are some common errors:

1. Missing the Letter “S”

A common mistake is naming the file “robot.txt” instead of “robots.txt.” Search engines will ignore the file if it’s named incorrectly.

2. Incorrect File Extension

The robots.txt file must always be saved with the .txt extension. Common errors include:

- robots.txt.txt

- robots.doc

- robots.html

3. Wrong File Location

Some users upload the robots.txt file into a subfolder rather than placing it in the root directory.

Incorrect locations:

- /files/robots.txt

- /seo/robots.txt

Correct location:

- yourdomain.com/robots.txt

4. Automatic Generation Errors

Some CMS platforms automatically generate robots.txt files, but they may include:

- Unnecessary blocking rules

- Incorrect directories

- Outdated settings

Always review and update auto-generated files before publishing to ensure they meet your needs.

Avoiding these common mistakes ensures that search engines can properly read and follow your crawling instructions.

Robots.txt Syntax Rules

| Directive | Purpose |
|---|---|
| User-agent | Specifies which crawler the rule applies to |
| Disallow | Prevents crawlers from accessing specific URLs |
| Allow | Permits crawling of specific pages |
| Sitemap | Provides the location of the sitemap |

Example:

User-agent: *
Disallow: /private/
Allow: /blog/

Sitemap: https://example.com/sitemap.xml

Robots.txt vs Meta Robots Tag

| Feature | Robots.txt | Meta Robots Tag |
|---|---|---|
| Location | Root directory | Inside HTML page |
| Controls crawling | Yes | No |
| Controls indexing | Limited | Yes |

Robots.txt controls whether bots can crawl a page, while meta robots tags control whether the page should appear in search results.
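Because the meta robots tag lives inside the HTML, a crawler only sees it on pages robots.txt allows it to fetch. The sketch below, built on Python's html.parser, shows how a tool might read the tag from a page; the class and function names are hypothetical, and it only looks at meta tags named robots (not crawler-specific ones such as googlebot).

```python
from html.parser import HTMLParser

class MetaRobotsFinder(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            # content is a comma-separated list such as "noindex, follow"
            self.directives += [d.strip().lower() for d in attr.get("content", "").split(",")]

def has_noindex(html: str) -> bool:
    finder = MetaRobotsFinder()
    finder.feed(html)
    return "noindex" in finder.directives

print(has_noindex('<head><meta name="robots" content="noindex, follow"></head>'))  # True
```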

Robots.txt Validation and Testing Tools

Before publishing a robots.txt file, it should always be tested.

Common tools include:

- Google Search Console robots.txt report (the successor to the retired robots.txt Tester)

- technical SEO auditing platforms

- robots.txt validators

Testing helps detect:

- spelling mistakes

- syntax errors

- blocked pages

- crawler access issues
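Alongside those tools, you can keep a small table of URLs with the result you expect and re-run it whenever the file changes. In this sketch the rules, paths, and expectations are hypothetical; it relies on Python's urllib.robotparser and therefore inherits its first-match, no-wildcard behavior.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
"""

# Hypothetical expectations: URL path -> should generic crawlers be allowed?
EXPECTATIONS = {
    "/": True,
    "/blog/robots-guide": True,
    "/admin/login": False,
    "/search?q=shoes": False,
}

def failing_checks(robots_txt, expectations, agent="*", site="https://example.com"):
    """Return the paths whose crawlability differs from what was expected."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path, expected in expectations.items()
            if parser.can_fetch(agent, site + path) != expected]

print(failing_checks(ROBOTS_TXT, EXPECTATIONS) or "all checks passed")
```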

Robots.txt and Crawl Budget Optimization

Search engines allocate a limited crawl budget for each site.

Robots.txt helps prevent crawlers from wasting resources on:

- Filtered URLs

- Duplicate content

- Admin dashboards

- Internal search pages

This improves crawl efficiency, supports technical SEO performance, and helps search engines prioritize important website content.

Robots.txt for WordPress Websites

WordPress automatically generates a basic robots.txt configuration.

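Example:

User-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php

Sitemap: https://example.com/sitemap.xml

The Allow line keeps admin-ajax.php reachable, because themes and plugins call it from public pages, while the rest of /wp-admin/ stays blocked.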
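The Allow line works because Google, following RFC 9309, applies the most specific (longest) matching rule and prefers Allow when two matching rules are equally long. The sketch below implements just that precedence rule with plain prefix matching; it is a simplified illustration, not Google's parser. Python's urllib.robotparser, by contrast, stops at the first matching rule, so for this file it would report admin-ajax.php as blocked.

```python
def is_allowed(rules, path: str) -> bool:
    """Longest matching rule wins; Allow wins a tie; no match means allowed.

    rules is a list of (directive, path) pairs. Plain prefix matching only
    (no * or $ wildcards) to keep the sketch short.
    """
    best_len, allowed = -1, True
    for directive, rule_path in rules:
        if not rule_path or not path.startswith(rule_path):
            continue                      # empty or non-matching rule
        is_allow = directive.lower() == "allow"
        if len(rule_path) > best_len or (len(rule_path) == best_len and is_allow):
            best_len, allowed = len(rule_path), is_allow
    return allowed

WORDPRESS_RULES = [("Disallow", "/wp-admin/"),
                   ("Allow", "/wp-admin/admin-ajax.php")]

print(is_allowed(WORDPRESS_RULES, "/wp-admin/admin-ajax.php"))  # True
print(is_allowed(WORDPRESS_RULES, "/wp-admin/options.php"))     # False
print(is_allowed(WORDPRESS_RULES, "/blog/hello-world/"))        # True
```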

Advanced Robots.txt Directives

Blocking Specific Bots

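To block a single crawler, name it in a User-agent line and disallow the entire site for that group:

User-agent: [bot name]
Disallow: /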
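Here is how such a group behaves when checked with Python's urllib.robotparser. ExampleBot is a hypothetical crawler name; the * group with an empty Disallow value leaves the site open to every other bot.

```python
from urllib.robotparser import RobotFileParser

# "ExampleBot" is a hypothetical crawler name used for illustration.
ROBOTS_TXT = """\
User-agent: ExampleBot
Disallow: /

User-agent: *
Disallow:
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

page = "https://example.com/blog/post"
print(parser.can_fetch("ExampleBot", page))  # False: its group disallows everything
print(parser.can_fetch("Googlebot", page))   # True: the "*" group allows everything
```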

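Blocking File Types

RFC 9309, and crawlers such as Google's and Bing's, support two special characters in paths: * matches any sequence of characters, and $ marks the end of the URL. Together they let one rule match every URL that ends in a given file extension.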
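Python's urllib.robotparser does not understand these wildcards, so the sketch below translates a rule containing * and $ into a regular expression by hand. The /*.pdf$ rule is a hypothetical example, and the matching is simplified: it ignores percent-encoding and rule precedence.

```python
from __future__ import annotations

import re

def rule_to_regex(rule_path: str) -> re.Pattern:
    """Translate a robots.txt path rule with * and $ into a regex.

    * matches any run of characters; a trailing $ anchors the rule to the
    end of the URL path (including any query string). Everything else is
    literal, and matching always starts at the beginning of the path.
    """
    anchored = rule_path.endswith("$")
    if anchored:
        rule_path = rule_path[:-1]
    pattern = ".*".join(re.escape(part) for part in rule_path.split("*"))
    return re.compile(pattern + ("$" if anchored else ""))

def matches(rule_path: str, url_path: str) -> bool:
    return rule_to_regex(rule_path).match(url_path) is not None

# Hypothetical rule: keep crawlers away from every PDF file.
print(matches("/*.pdf$", "/docs/guide.pdf"))      # True
print(matches("/*.pdf$", "/docs/guide.pdf?v=2"))  # False: $ requires the URL to end in .pdf
print(matches("/*.pdf", "/docs/guide.pdf?v=2"))   # True: without $ the rule is prefix-style
```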

Advanced rules help manage crawler behavior on large websites.

Robots.txt Security Misconceptions

Robots.txt is not a security tool.

The file only provides instructions for bots. It cannot prevent users from accessing restricted URLs directly, and malicious crawlers may ignore it.

Sensitive content should be protected using:

- Authentication systems

- Password protection

- Server security controls

Robots.txt SEO Checklist

Before publishing your robots.txt file:

✔ file name is robots.txt

✔ file is in the root directory

✔ syntax rules are correct

✔ no spelling mistakes exist

✔ important pages are not blocked

✔ sitemap URL is included

✔ file has been tested

Conclusion

Robots.txt files play a crucial role in technical SEO and website crawling management. However, even small configuration errors or spelling mistakes made while generating robots.txt files can cause serious indexing problems and prevent search engines from accessing important pages.

Whether you create robots.txt manually or use a robots.txt generator, it is important to verify the rules carefully.

Free online generators can simplify the process, but testing and validation remain essential.

A properly configured robots.txt file helps search engines crawl your website efficiently, keeps crawlers out of areas that do not belong in search, and supports overall SEO performance.

Spelling Mistakes When Generating Robots.txt Files: FAQs

1. What is a robots.txt file, and why is it important for SEO?

The robots.txt file is a simple text document that helps search engine bots understand which pages on your website they can and cannot crawl. Proper configuration of this file is crucial for SEO as it prevents unnecessary crawling and ensures efficient indexing of your site’s important pages.

2. How do spelling mistakes in robots.txt files affect SEO?

Even minor spelling errors in the robots.txt file, such as missing letters or incorrect file extensions, can cause search engines to ignore the file entirely, leading to unintended crawling of sensitive pages and inefficient use of crawl budgets, ultimately affecting your website’s SEO performance.

3. How can I correctly generate a robots.txt file for my website?

To generate a robots.txt file correctly, ensure it is named “robots.txt” and placed in the root directory. The file should include directives like “User-agent,” “Disallow,” and “Sitemap,” with each rule clearly specifying which pages are to be crawled or blocked. Tools like robots.txt generators can simplify the process, but always double-check the generated content for accuracy.

4. What are some common robots.txt syntax mistakes, and how can I avoid them?

Common mistakes include misspelled directive names (such as “Disalow”), wrong file extensions, files placed in the wrong directory, and misconfigured crawl directives. To avoid these errors, always save the file as “robots.txt,” place it in the root directory, and verify the syntax using robots.txt validation tools before publishing it.

5. Can robots.txt files help optimize crawl budgets for large websites?

Yes, by blocking irrelevant pages, such as admin dashboards and duplicate content, a properly configured robots.txt file helps search engines focus on crawling important pages, thus improving crawl efficiency and optimizing your website’s crawl budget for better SEO results.